Introduction: The New Frontier of Open-Weights AI

In early 2026, the artificial intelligence industry reached a strategic inflection point that many analysts now describe as the “DeepSeek moment” of the trillion-parameter era. With the release of Kimi K2.5, Alibaba-backed Moonshot AI has fundamentally disrupted how frontier intelligence is built, deployed, and monetized.

For years, top-tier reasoning capabilities were locked behind proprietary APIs controlled by Western AI labs. Kimi K2.5 breaks that paradigm by delivering a 1.04 trillion-parameter open-weights model. It also shifts the framing from paying a variable cost per task toward running an orchestrated workforce of autonomous digital agents.

This is not a routine model upgrade. Kimi K2.5 represents a structural evolution in AI design, execution, and economics—one that prioritizes native multimodality, parallel agent execution, and enterprise-grade autonomy over chat-centric interaction.

Why Kimi K2.5 Matters

At a strategic level, Kimi K2.5 introduces four capabilities that redefine what developers and enterprises can expect from large language models:

Native multimodality, trained on over 15.5 trillion mixed visual and textual tokens, enabling seamless cross-modal reasoning without external adapters

Agent swarm orchestration, allowing up to 100 specialized sub-agents to execute tasks in parallel

Vision-to-code intelligence, where screenshots or screen recordings translate directly into production-ready interfaces

High-density office productivity, enabling the creation of long-form reports, financial models, and technical documents end to end

To understand how a trillion-parameter system delivers this capability without prohibitive costs, we need to examine its architectural foundation.

The Architecture Behind Trillion-Parameter Efficiency

Kimi K2.5 is built on a Mixture-of-Experts (MoE) architecture, a design choice that prioritizes inference efficiency over brute-force density. Although the model contains more than one trillion parameters, only a small subset—roughly 32 billion—is activated per token during inference.

This sparse activation strategy allows Kimi K2.5 to preserve deep reasoning capacity while dramatically reducing computational overhead. The model supports a long context window of up to 256k tokens and uses Multi-head Latent Attention and SwiGLU activations. It was trained with the MuonClip optimizer, which is designed to keep training stable at this scale.

Internally, computation is routed across hundreds of specialized experts, dynamically selected per token. The result is a system that behaves less like a monolithic generalist and more like a generalized specialist, adapting its reasoning pathways based on the task at hand.
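To make the routing idea concrete, here is a minimal sketch of generic top-k expert routing. It is not Kimi K2.5's actual router: the softmax gate, the toy scalar experts, and the values of `NUM_EXPERTS` and `TOP_K` are all assumptions chosen for illustration. The last line simply divides the two parameter counts quoted above.

```python
import math
import random

def top_k_route(router_logits, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts.

    Weights are a softmax over the selected logits only, so they sum to 1.
    """
    top = sorted(range(len(router_logits)),
                 key=lambda i: router_logits[i], reverse=True)[:k]
    peak = max(router_logits[i] for i in top)  # subtract for numerical stability
    exps = [math.exp(router_logits[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]

def moe_forward(token, experts, router_logits, k):
    """Run only the selected experts and mix their outputs by gate weight."""
    routes = top_k_route(router_logits, k)
    return sum(weight * experts[i](token) for i, weight in routes)

# Toy setup (hypothetical): 8 scalar "experts", each just scales its input.
NUM_EXPERTS, TOP_K = 8, 2
experts = [lambda x, s=i + 1: s * x for i in range(NUM_EXPERTS)]
rng = random.Random(0)
logits = [rng.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]

routes = top_k_route(logits, TOP_K)
output = moe_forward(1.0, experts, logits, TOP_K)

# Share of parameters active per token, using the figures quoted in the article.
active_fraction = 32e9 / 1.04e12  # roughly 3 percent
```

The point of the sketch is that the other six experts are never evaluated for this token, which is where the compute savings come from. Production routers typically add load balancing and other refinements that are omitted here.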

Native Multimodality and the Rise of “Vibe Coding”

One of Kimi K2.5’s most consequential innovations is its native multimodal training. Unlike older AI systems that attach a vision model to a language backbone, Kimi K2.5 was trained with vision and language as a single cognitive system from the start.

This architectural choice eliminates translation loss between modalities and enables the model to reason about spatial layout, aesthetics, and interaction logic with the same fluency it applies to text.

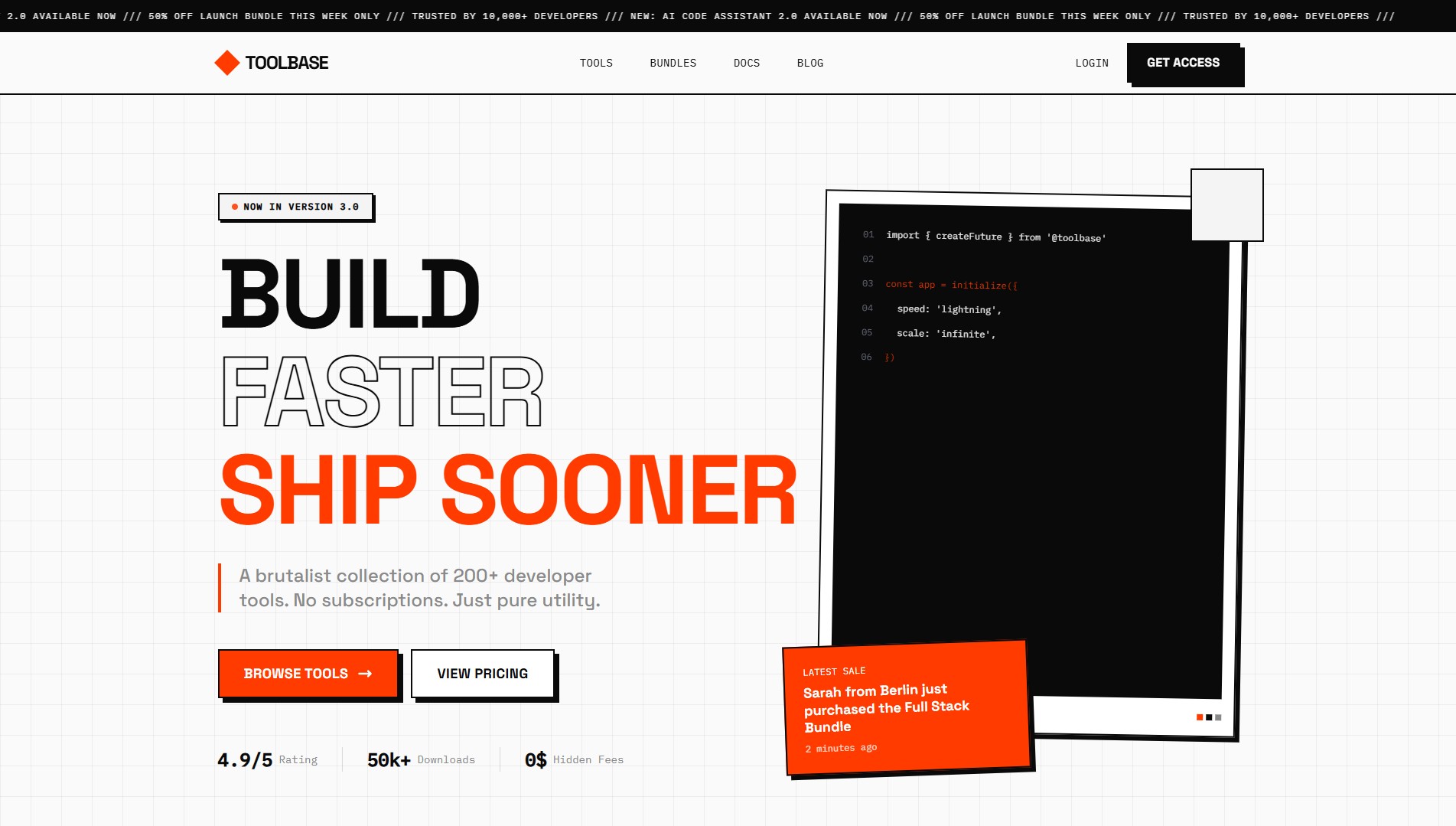

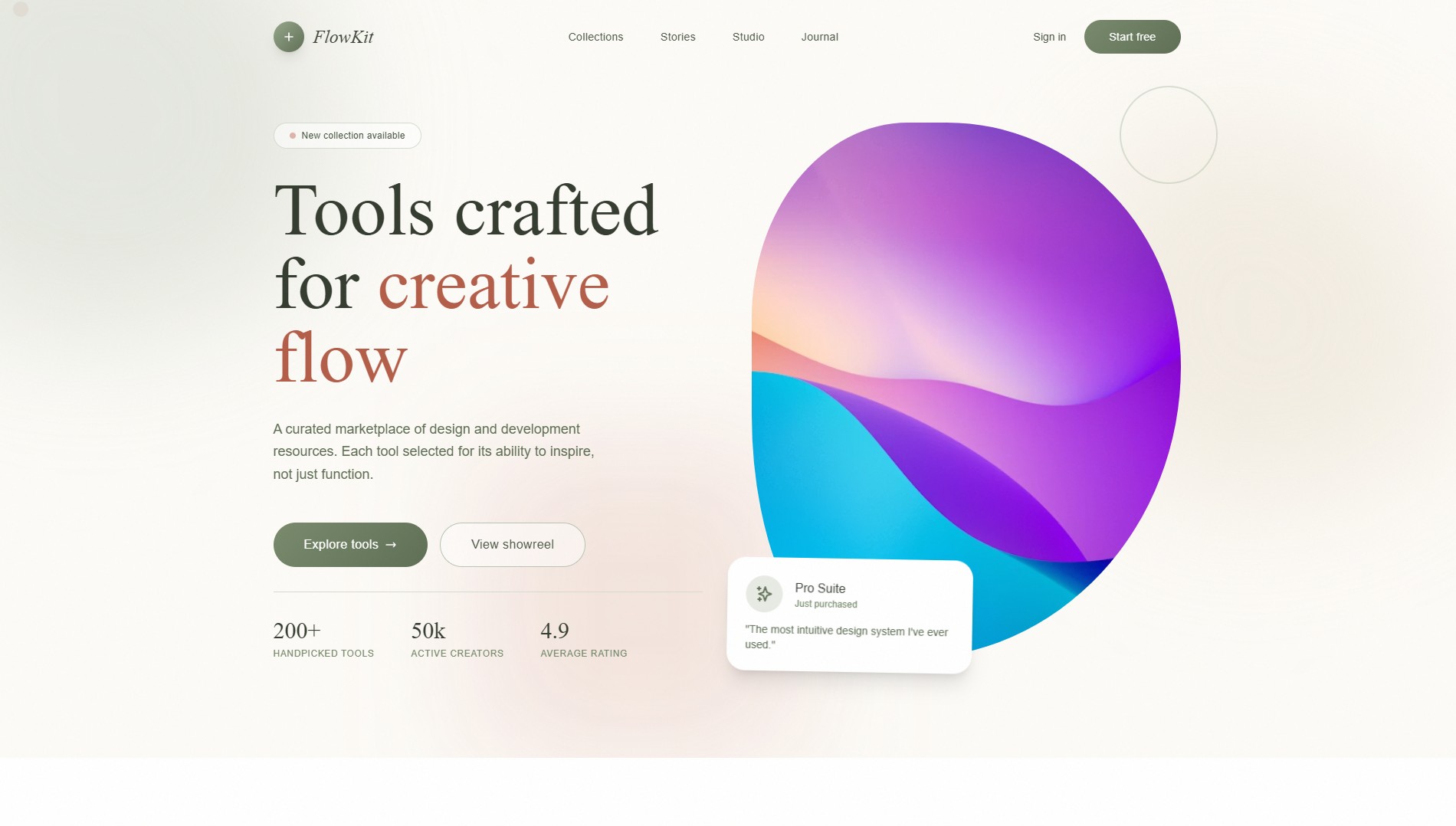

For developers, this has enabled the rise of Vision-to-Code workflows, often referred to as vibe coding. Instead of describing an interface in words, developers can simply provide a screenshot or screen recording. Kimi K2.5 reconstructs the interface as interactive, production-ready code—capturing animations, scrolling behavior, and UI intent that static tools routinely miss.

More notably, the model can visually inspect its own outputs, comparing rendered interfaces against a reference aesthetic and iteratively correcting inconsistencies. This capability pushes AI beyond generation into autonomous visual debugging.
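As a rough illustration of what "providing a screenshot" looks like in practice, the sketch below builds a chat request that pairs an image with an instruction. It assumes an OpenAI-style chat-completions message format; whether a given Kimi K2.5 endpoint accepts exactly this shape is an assumption, and the model identifier `kimi-k2.5` is a placeholder rather than a confirmed name. No network call is made.

```python
import base64
import json

def build_vision_to_code_request(image_bytes, instruction, model="kimi-k2.5"):
    """Build an OpenAI-style chat payload pairing a screenshot with an instruction.

    The default model identifier is a placeholder, not a confirmed name.
    """
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "model": model,
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": instruction},
                    {
                        "type": "image_url",
                        "image_url": {"url": f"data:image/png;base64,{encoded}"},
                    },
                ],
            }
        ],
    }

# Stand-in bytes; in practice these would be the contents of a PNG screenshot.
fake_screenshot = b"\x89PNG\r\n\x1a\n" + b"\x00" * 16

payload = build_vision_to_code_request(
    fake_screenshot,
    "Recreate this interface as a single HTML file with inline CSS and JS.",
)
body = json.dumps(payload)  # serialized request body for whichever endpoint you use
```

A visual self-check loop would follow the same pattern: render the generated code, capture a new screenshot, and send it back alongside the reference image with a request to list and fix the differences.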

Scaling Out: The 100-Agent Swarm Paradigm

Perhaps the most transformative capability introduced by Kimi K2.5 is its Agent Swarm architecture. Rather than operating as a single assistant, the model functions as a trainable orchestrator capable of managing a distributed workforce of AI sub-agents.

Using Parallel-Agent Reinforcement Learning (PARL), Kimi K2.5 decomposes complex objectives into parallel tasks and dynamically instantiates specialized agents—researchers, analysts, validators—to execute them concurrently.
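The orchestration pattern itself can be sketched in a few lines of Python. This is not PARL and involves no model at all: `run_subagent` is a hypothetical stand-in that sleeps to simulate work, and the roles and task names are invented for illustration. It only shows the shape of fanning out independent sub-tasks and collecting their results.

```python
import asyncio
import time

async def run_subagent(role, task, seconds):
    """Stand-in for a sub-agent call; sleeps to simulate work."""
    await asyncio.sleep(seconds)
    return f"{role}: finished {task}"

async def orchestrate(subtasks):
    """Launch every sub-task at once and wait for all of them to finish."""
    return await asyncio.gather(*(run_subagent(*s) for s in subtasks))

# Hypothetical decomposition of one objective into three independent sub-tasks.
subtasks = [
    ("researcher", "collect pricing pages", 0.1),
    ("analyst", "summarize feature gaps", 0.1),
    ("validator", "cross-check claims", 0.1),
]

start = time.perf_counter()
results = asyncio.run(orchestrate(subtasks))
elapsed = time.perf_counter() - start  # close to the slowest task, not the sum
```

Because the three sub-tasks do not depend on each other, total wall-clock time tracks the slowest one rather than the sum of all three.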

This approach solves a common failure mode in agentic systems known as serial collapse, where models default to sequential execution despite having parallel capacity. Kimi K2.5 enforces parallelism through reward shaping and evaluates performance using a critical path metric, ensuring that new agents are spawned only when they reduce overall execution time.
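The article does not define the critical path metric precisely, so the following sketch uses one common reading: the length of the longest dependency chain in the task graph. The task names, durations, and the `worth_spawning` helper are hypothetical and exist only to show the comparison between serial time and critical-path time.

```python
def critical_path(durations, depends_on):
    """Length of the longest dependency chain in a task DAG."""
    memo = {}

    def finish(task):
        # Earliest finish time: latest prerequisite finish plus own duration.
        if task not in memo:
            start = max((finish(d) for d in depends_on.get(task, [])), default=0.0)
            memo[task] = start + durations[task]
        return memo[task]

    return max(finish(t) for t in durations)

def worth_spawning(time_before, time_after, overhead=0.0):
    """Spawn another agent only if it shortens the end-to-end time."""
    return time_after + overhead < time_before

# Hypothetical workflow: plan, three independent scrapes, then a report.
durations = {"plan": 1.0, "scrape_a": 4.0, "scrape_b": 3.0,
             "scrape_c": 5.0, "report": 2.0}
depends_on = {
    "scrape_a": ["plan"],
    "scrape_b": ["plan"],
    "scrape_c": ["plan"],
    "report": ["scrape_a", "scrape_b", "scrape_c"],
}

serial_time = sum(durations.values())                  # 15.0
parallel_time = critical_path(durations, depends_on)   # 1.0 + 5.0 + 2.0 = 8.0
```

In this toy graph, running the three scrapes in parallel cuts the end-to-end time from 15 units to 8. Adding a fourth agent would only help if it shortened the longest chain, which is the condition `worth_spawning` checks.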

In practice, this yields dramatic gains: workflows that once took hours—such as competitive analysis across dozens of websites—can now be completed in minutes through coordinated parallel execution.

Enterprise Productivity and Knowledge Work Automation

Kimi K2.5 is optimized not for conversational output, but for high-value knowledge assets. In professional environments, it can autonomously produce:

Long-form documents exceeding 10,000 words

Complex financial spreadsheets with advanced formulas

LaTeX-integrated technical PDFs

Narrative-driven presentation decks generated from raw data

In internal benchmarks, Moonshot AI reported a nearly 60 percent improvement in office productivity compared to earlier Kimi models. For legal, financial, and academic workflows—where long-context coherence and precision are critical—Kimi K2.5 offers a compelling alternative to fragmented toolchains and manual coordination.

Local Deployment and Hardware Considerations

While Kimi K2.5 is released as open weights, deploying a trillion-parameter model locally requires a realistic assessment of hardware constraints. Full-performance deployments rely on high-end GPU clusters with fast interconnects to avoid MoE routing bottlenecks.

Lower-resource configurations using aggressive quantization can run on large-memory systems, though at reduced inference speeds that are better suited to batch processing than to interactive use.
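A back-of-the-envelope calculation shows why quantization matters here. The sketch below estimates memory for the weights alone at a few uniform bit widths, using the 1.04 trillion parameter count quoted earlier. It is an approximation under stated assumptions: it ignores the KV cache, activations, and quantization metadata, and real quantized releases often mix bit widths across layers.

```python
def weight_memory_gb(num_params, bits_per_param):
    """Approximate memory for the weights alone, in decimal gigabytes."""
    return num_params * bits_per_param / 8 / 1e9

TOTAL_PARAMS = 1.04e12  # total parameter count quoted in the article

# Uniform bit widths are an assumption made for this estimate.
estimates = {bits: weight_memory_gb(TOTAL_PARAMS, bits) for bits in (16, 8, 4)}

for bits, gb in estimates.items():
    print(f"{bits:>2}-bit weights: ~{gb:,.0f} GB")
```

That works out to roughly 2,080 GB at 16 bits, 1,040 GB at 8 bits, and 520 GB at 4 bits. Note that sparse activation reduces compute per token, not storage: every expert still has to be held in memory or offloaded somewhere reachable.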

From a licensing perspective, Kimi K2.5 adopts a modified MIT license, positioning it as open weights rather than strictly open source. Compared to proprietary models, this still represents a significant step toward AI sovereignty, allowing enterprises to host frontier intelligence on-premises.

Kimi K2.5 vs Proprietary Frontier Models

Moonshot AI positions Kimi K2.5 as a direct competitor to closed models such as GPT-5.2 and Claude Opus 4.5. Across mathematics, coding, and tool-augmented reasoning benchmarks, Kimi K2.5 performs at or near state-of-the-art levels among open models, trailing proprietary leaders only marginally in select high-difficulty tasks.

The strategic trade-off is clear: proprietary models offer slightly higher peak benchmark scores, while Kimi K2.5 offers full control, privacy, and parallel scalability. For organizations focused on long-term AI infrastructure rather than short-term convenience, Kimi K2.5 is the stronger fit.

Conclusion: From Chatbots to Autonomous Orchestrators

Kimi K2.5 is more than a model release—it is a signal that the AI industry is transitioning from the chatbot era to the autonomous orchestrator era. By treating native multimodality and parallel execution as foundational capabilities, Moonshot AI has redefined what large language models are designed to do.

This is a meaningful step toward artificial general intelligence—not as a monolithic superintelligence, but as a coordinated system of agents collaborating with humans on complex, real-world work.

For developers and enterprises exploring the future of vibe coding, agentic labor, and sovereign AI infrastructure, Kimi K2.5 offers an early glimpse of what that future looks like.

Here are some results from one-shot prompts we used to test Kimi K2.5's frontend capabilities: